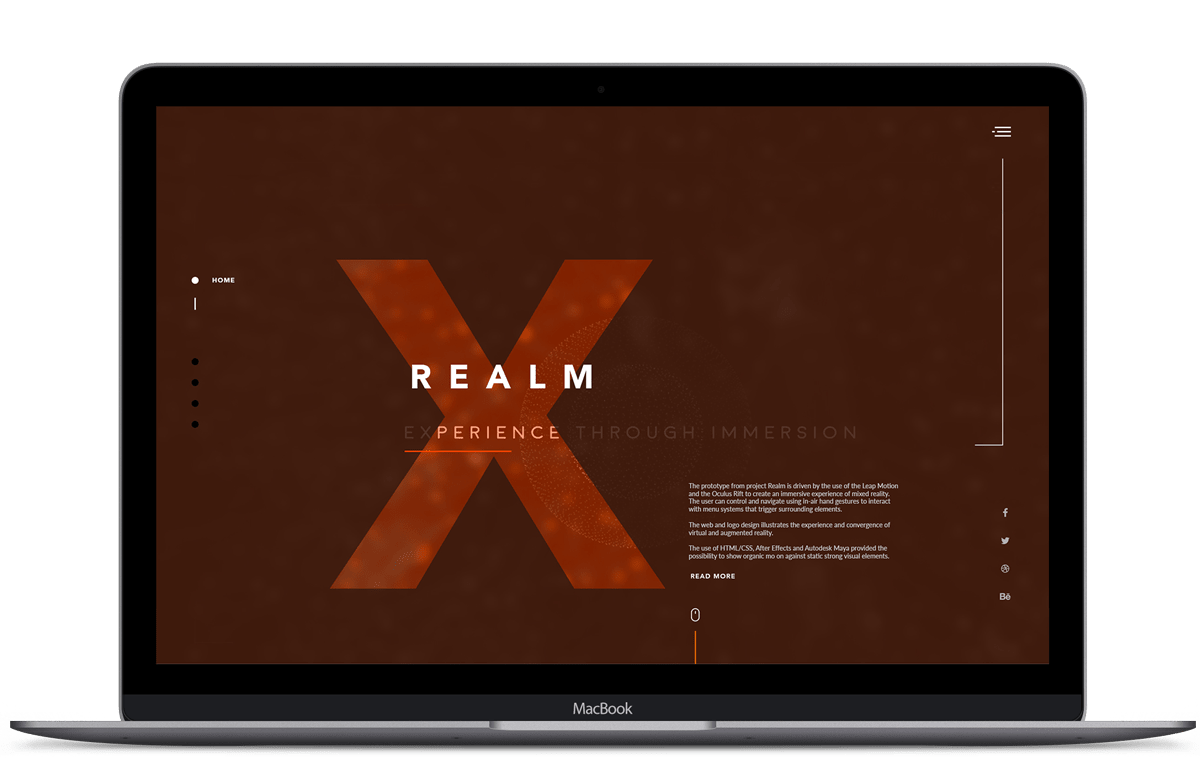

THE STUDY OF NATURAL HAND GESTURAL COMMANDS TO NAVIGATE AND INTERACT WITH IMMERSIVE 3D INTERFACES AND WIDGETS

Role

Abstract

For many years, the main form of digital interaction has been with desktop computers and portable devices such as mobile phones and touchscreen tablets. A new generation of head-mounted displays (HMDs) is now beginning to deliver the much-anticipated arrival of augmented reality (AR) and virtual reality (VR).

As technology progresses, there is an increasing need to study current trends in user and developer communities and to contextualize them within the ongoing evolution of human-computer interfaces. As these trends in user interfaces (UIs) emerge, it is also crucial to understand user interaction behaviors and to analyze the current flaws. In this study, we focus specifically on mixed reality (MR) within immersive simulations, enabled by combining VR headsets with vision sensors. We present complementary guidelines based on an analysis of apps on the Leap Motion market and outline further suggestions to help developers and designers create more natural user interface experiences.

These suggestions cover the ergonomics of an immersive space, described through five zones of interaction; UI composition using minimal graphical buttons; object manipulation through intuitive gestural interactions that take actual size into account; and stronger interaction feedback.

View Thesis

Results

From the study, we observed five different zones in which users respond:

Stretch zone, the range in which UIs are placed far enough away that users must move out of their position to interact successfully.

Comfort zone, a safe distance that gives positive feedback and avoids accidentally triggering other objects through collisions. This zone works best between 0.5 meters and 1 meter from the headset.

Curiosity zone, where users notice graphical elements in their peripheral vision that cause them to turn their heads.

Uncommon zone, a position that is rarely noticed or interacted with, which makes elements placed there redundant unless graphical prompts extend into the curiosity zone and guide the user’s eye and attention into the uncommon zone.

The last of these is the No zone, the distance that should be avoided because UIs placed there irritate the user and block their view through the HMD.
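The comfort zone is the only zone given a numeric range above (0.5 to 1 meter from the headset), so it is the one that can be checked directly in code. The following is a minimal sketch, not taken from the thesis or the app: the function names, the tuple-based `(x, y, z)` positions in meters, and the inclusive bounds are all assumptions made for illustration.

```python
import math

# Comfort-zone bounds in meters, as stated in the results above.
# Treating both bounds as inclusive is an assumption.
COMFORT_MIN_M = 0.5
COMFORT_MAX_M = 1.0


def distance_m(headset_pos, widget_pos):
    """Euclidean distance between two (x, y, z) points, in meters."""
    return math.dist(headset_pos, widget_pos)


def in_comfort_zone(headset_pos, widget_pos):
    """Return True if the widget lies 0.5 m to 1 m from the headset."""
    d = distance_m(headset_pos, widget_pos)
    return COMFORT_MIN_M <= d <= COMFORT_MAX_M


if __name__ == "__main__":
    headset = (0.0, 0.0, 0.0)
    # Hypothetical widget positions, used only to exercise the check.
    for widget in [(0.0, 0.0, 0.3), (0.0, 0.0, 0.75), (0.0, 0.0, 1.5)]:
        print(widget, in_comfort_zone(headset, widget))
```

A designer could run a check like this over a scene's widget positions to flag elements that fall outside the comfort range. The other four zones are described qualitatively, so they are deliberately left out of the sketch.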

A few participants mentioned being aware of the 360-degree environment around them; however, during their experience they rarely looked behind them to interact with widgets and menu systems.

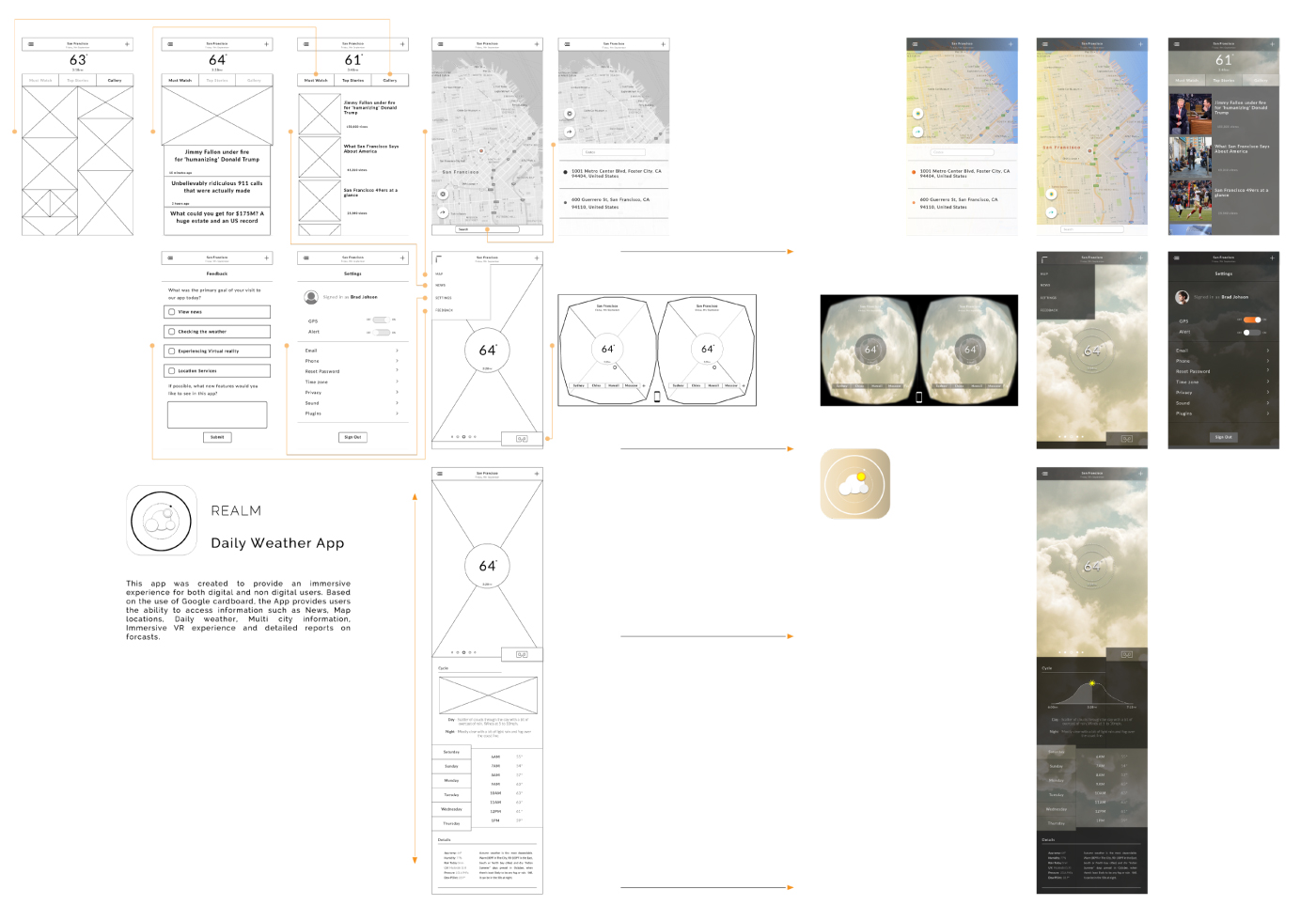

Website

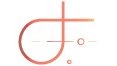

The App

This app was created to provide an immersive experience for both digital and non-digital users. It is based on Google Cardboard.

The app gives users access to information such as news, map locations, daily weather, multi-city information, an immersive VR experience, and detailed forecast reports.

Conclusion

The contribution of this research is an in-depth analysis that gathers themes and guidelines from observing user interface trends and user behaviors within existing applications. These applications are built for the Oculus Rift and the Leap Motion sensor, which provide users with VR and MR experiences. To support the results of this research, a prototype weather visualization app was designed and implemented following the derived guidelines. This was done after phase 3 to show how future implementations can produce more positive experiences for users who are experiencing MR and holographic UIs for the first time.

The results of this study offer new and current themes that may benefit future immersive experiences by reflecting on the proposed guideline principles. Building on existing principles from the Leap Motion community, which focus on desktop interaction, this research gains deeper insight into user behavior when interacting with user interfaces through in-air gestures in an immersive state. To further evaluate the findings, the app from this research will be submitted to the Leap Motion community as an open-source project for others to build on in future work. Furthermore, this study presents alternative and preferred commands for more immediate results, giving a clear idea of which gestural navigation commands are most appropriate when interacting in the new digital era.

In conclusion, developers and designers can use the data and findings from this research to think about what comes next in the realm of immersive mixed and virtual realities.